Yesterday (13th April 2026) I got a chance to listen to the one and only Prof. Barbara Oakley, best known for the famous “Learning How to Learn” course. To be completely honest, I never took the course, but I’ve heard overwhelmingly positive things about it since I was a kid. That’s why I joined the class.

Learning how to actually learn is undoubtedly the most important skill to have in 2026, because knowledge is easier than ever to access. On top of that, we now have powerful large language models that can quickly gather everything about the subject you’re interested in and do awesome things with it (guiding, explaining, visualizing, coming up with metaphors, you name it!).

Without further ado, let’s see what I learned from this special lecture:

Building Minds in the Age of AI: Why Smarter Technology Is Making Us Dumber

The 2 Systems

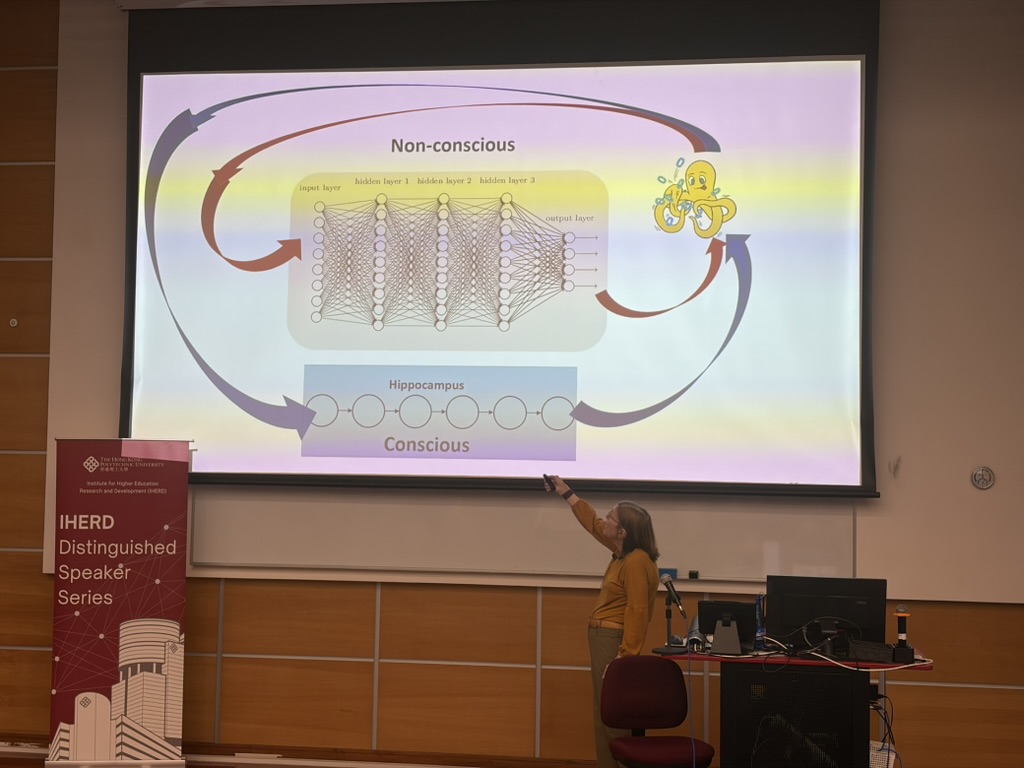

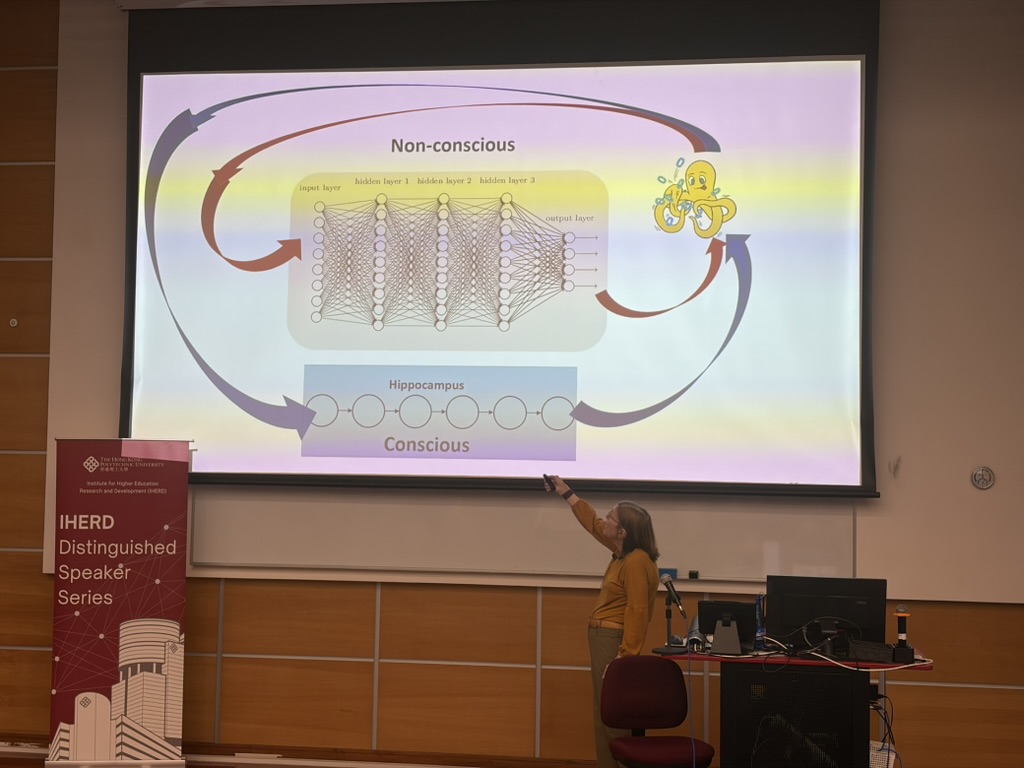

To understand how we can build great minds, we first have to quickly understand how our mind (brain) works. In his book Thinking, Fast and Slow, Daniel Kahneman describes the brain as two systems:

- The quick, intuitive, non-conscious brain

- The slow, thinking, conscious brain

The two systems work together and support each other. We can’t use the slow brain for everything because it’s, well, slow: you can’t play a sport by thinking through every move! You need quick responses. The same goes for the fast brain: you can’t solve complex problems on intuition alone, you need the thinking brain!

That’s a really quick summary of how the brain works. If you want a more in-depth explanation from someone much smarter than me, I highly recommend Veritasium’s video, which explains this concept really well: https://www.youtube.com/watch?v=UBVV8pch1dM&t=233s

ChatGPT = Human Brain?

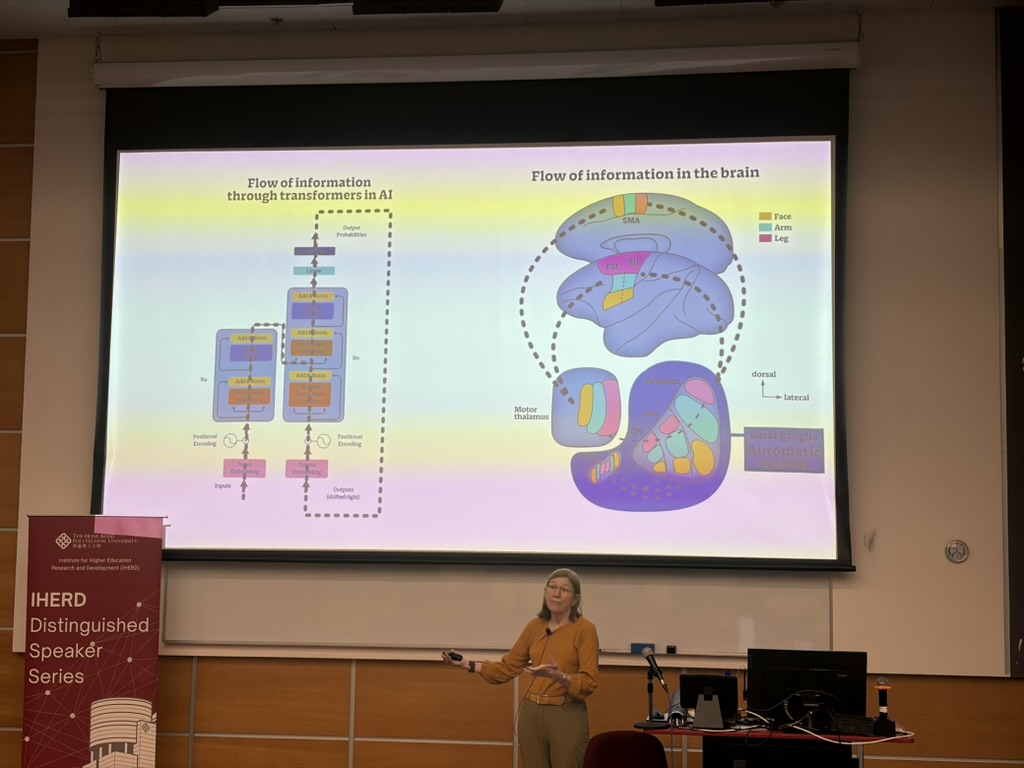

If you have been following AI and LLMs, you may have heard of the highly praised paper “Attention Is All You Need”. It’s the paper that introduced the Transformer architecture, the foundation of today’s pretentiously sophisticated chatbots, and quite frankly, in some ways it resembles how our brain operates.

A quick checklist of what they have in common:

- encoding and decoding

- attention and weighting

- neural networks and our neurons

- nobody really understands why it’s smart

It’s wonderful when you pay attention to how technology mimics nature, from the stripes in camouflage patterns to the brain itself.
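To make “attention and weighting” a bit more concrete, here is a minimal sketch of scaled dot-product attention, the weighting step at the heart of the Transformer. It’s a toy under stated assumptions: a single query, tiny hand-picked vectors made up for illustration, and plain Python lists instead of matrices. Real models learn the query, key, and value projections and run many attention heads in parallel.

```python
import math

def softmax(scores):
    # Subtract the max before exponentiating for numerical stability
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    d = len(query)
    # Similarity of the query to each key, scaled by sqrt(d)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    # The output is the attention-weighted average of the value vectors
    out = [0.0] * len(values[0])
    for w, v in zip(weights, values):
        for i, x in enumerate(v):
            out[i] += w * x
    return weights, out

# Toy example (made-up numbers): the query matches the second key best
query = [1.0, 0.0]
keys = [[0.0, 1.0], [1.0, 0.0], [0.5, 0.5]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, out = attention(query, keys, values)
print([round(w, 3) for w in weights])
print([round(x, 3) for x in out])
```

The query puts the most weight on the key it is most similar to, so the output leans toward that key’s value vector. That weighting is the sense in which the model “pays attention”.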

Again, if you have played around with deep learning in any form, you know that the way these systems become smart is basically data, a LARGE amount of data. As the most cliché analogy goes, a deep learning model (neural network) is a black box: you put data in, it adjusts its parameters by itself, and it gets better.
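The “adjusts its parameters by itself” part is usually done with gradient descent. Below is a minimal sketch of that loop, assuming the simplest possible “model” (one weight and one bias, i.e. a single linear neuron) and a made-up dataset generated from y = 2x + 1. A real neural network has millions of parameters, but the idea is the same: nudge each parameter against the gradient of the error.

```python
def train(data, lr=0.05, epochs=2000):
    """Fit y ≈ w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0  # start from an uninformed guess
    n = len(data)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        # Step each parameter a little in the direction that lowers the error
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical training data that follows the hidden rule y = 2x + 1
data = [(x, 2 * x + 1) for x in [0, 1, 2, 3, 4]]
w, b = train(data)
print(f"learned w = {w:.3f}, b = {b:.3f}")
```

Nobody tells the model that the rule is “2x + 1”; it recovers the parameters purely from the data and the error signal.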

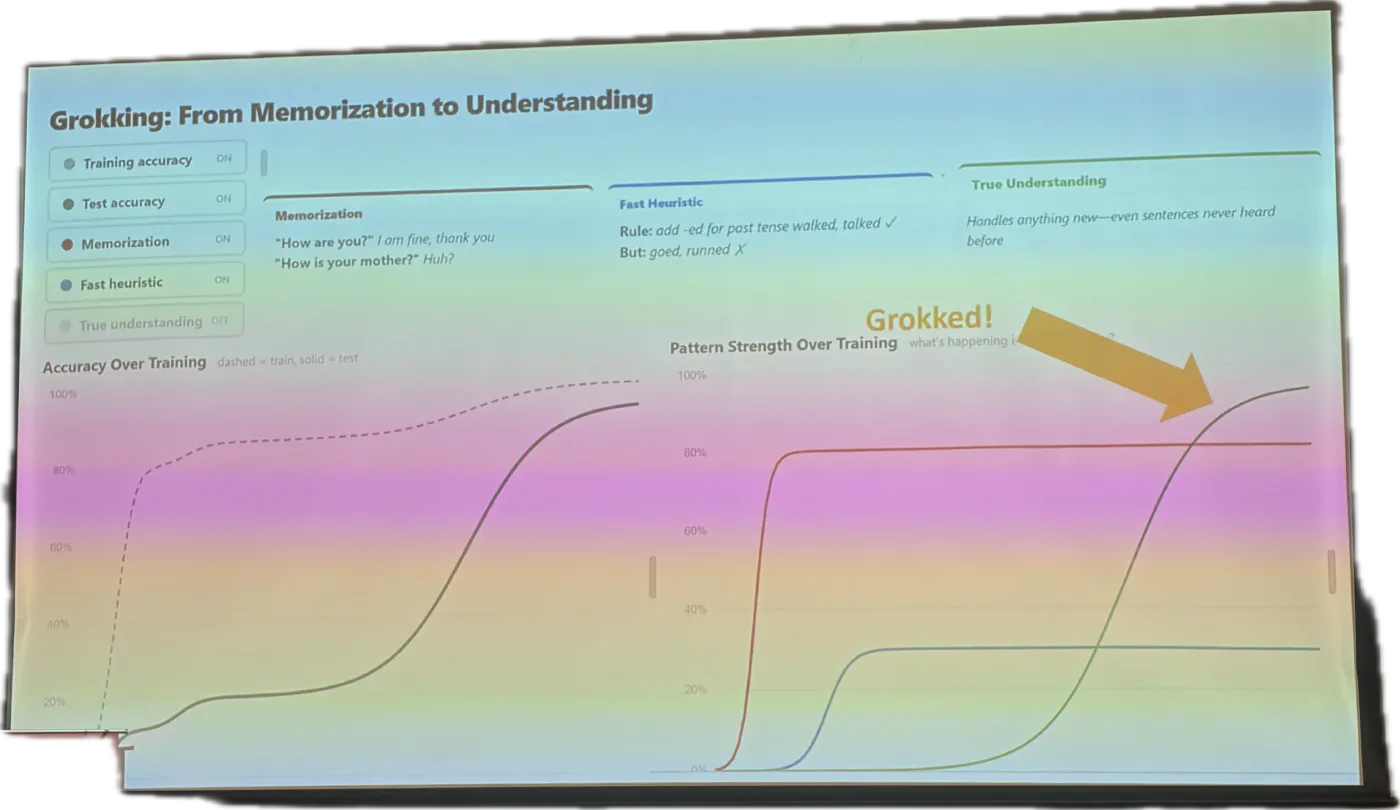

When we download data to train a model, we split it into 2 sets: training and testing. When a DL model first learns from the training data, it performs well on that same training data, but when we evaluate it on the test data, it underperforms tremendously. It wasn’t until training passed a certain threshold that the model reached its full capability and performed well on the test data too.
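The gap between training and test performance can be sketched by comparing two end states of a model. Everything here is a hypothetical assumption for illustration: the hidden rule (“is x divisible by 3?”), the numbers 0 to 99, and the simple ordered train/test split. One model only memorizes the training set; the other has found the rule. It doesn’t simulate the grokking process itself, only the before and after.

```python
def true_rule(x):
    # Hidden pattern behind the data (hypothetical): is x divisible by 3?
    return x % 3 == 0

# Deterministic split for reproducibility: first 70 numbers train, last 30 test
xs = list(range(100))
train_x, test_x = xs[:70], xs[70:]

def accuracy(model, data):
    # Fraction of inputs where the model agrees with the hidden rule
    return sum(model(x) == true_rule(x) for x in data) / len(data)

# Model 1 memorizes every training answer and guesses False on anything unseen
memory = {x: true_rule(x) for x in train_x}

def memorizer(x):
    return memory.get(x, False)

# Model 2 has "grokked" the underlying rule
def generalizer(x):
    return x % 3 == 0

for name, model in [("memorizer", memorizer), ("generalizer", generalizer)]:
    print(name, "train:", accuracy(model, train_x), "test:", round(accuracy(model, test_x), 3))
```

The memorizer is perfect on the training set but only right about two thirds of the time on the test set, while the model that found the rule does well on both.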

We call that sudden jump past the threshold “grokking”, and it’s tempting to say our brain works exactly the same way.

Well, not quite.

The truth is, the similarity only holds for the quick system of the brain: lots of data, training, and adjusting parameters until it fits.

It’s the same for humans when we use the quick brain. Take a motor skill like football: you can’t play like Ronaldo on your first day. Most likely you can’t even juggle, but after plenty of practice, adjusting your body little by little, you get better.

But our brain also has the slow system, and this is where everything is different. The slow system doesn’t improve by adjusting everything; it improves by recalling what it has already stored. Here’s an analogy Prof. Barbara used in the lecture: learning something new is like building a chain in the brain. When it’s first built, the links are weak and might dissolve later, but if you try to recall it, pulling on the chain repeatedly, it slowly gets stronger and stronger.
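Here is a toy sketch of the chain analogy, assuming a simple exponential forgetting curve in which every recall multiplies the memory’s “stability”. The formula and every number in it (1-day starting stability, a 2.5x boost per recall, reviews on days 1, 3, 7, and 14) are made-up assumptions for illustration, not a model from the lecture.

```python
import math

def retention(days_since_review, stability):
    # Toy forgetting curve: chance of recalling decays exponentially over time
    return math.exp(-days_since_review / stability)

def simulate(review_days, horizon=30, initial_stability=1.0, boost=2.5):
    """Retention at `horizon` days after learning, given the days we recalled it."""
    stability = initial_stability
    last = 0
    for day in sorted(review_days):
        # Each recall "pulls the chain": the memory becomes more stable
        stability *= boost
        last = day
    return retention(horizon - last, stability)

never = simulate([])
practiced = simulate([1, 3, 7, 14])
print(f"no recall: {never:.6f}, four recalls: {practiced:.2f}")
```

Without any recall the chain has essentially dissolved by day 30, while four pulls on the chain keep it at roughly two thirds retention in this toy model.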

As a bonus, when the slow brain works on something long enough, it picks up the simpler underlying patterns, which can then transfer to the fast brain. These underlying patterns that DISTILL into the automatic system are why senior doctors’ intuition tends to be correct.

Model fitting our brain

So far we’ve established that we have 2 brain systems, that the quick brain learns much like a deep learning model, and that a deep learning model has a certain threshold past which it becomes really smart, which we call GROKKING. The slow brain, on the other hand, gets better by recalling: we train it until it becomes grokked.

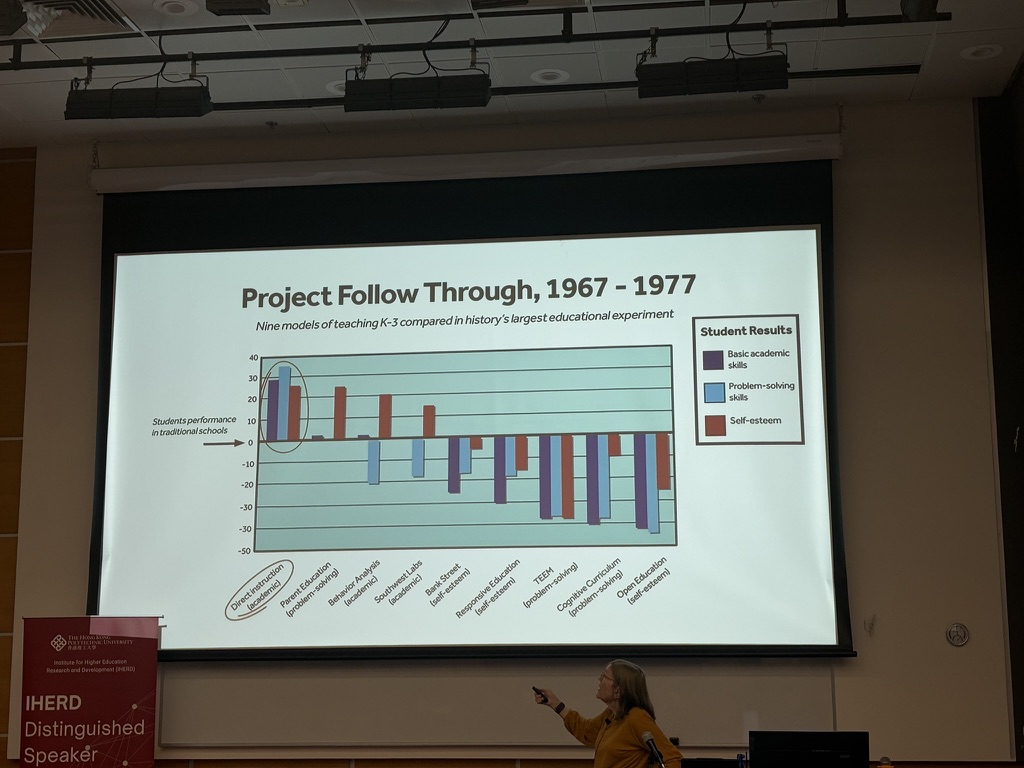

Another thing Barbara mentioned is the importance of instructed learning. AI gets much better when its training data includes instructions, and the same goes for humans, as this research shows: direct instruction is the most effective method of teaching.

So, as a good student, I’d like to take what I learned from this lecture, apply it to the testing data, and create my own “Learning How to Learn 2026 with AI” guide, built on the core idea of leveraging AI tools for learning. As you mentioned, AI can show us the knowledge, but we’re the ones who own it~

- Instruction: Before learning any topic, I research the best course to learn from and how I should structure my learning, and I let AI guide me through the study plan.

- Training: I take most of my notes on my laptop in a Zettelkasten system, so I already have a place to store all my knowledge. However, the system is mostly stale, since I take notes and leave them as they are. Now I quiz myself with AI every week on all the notes I’ve taken, and learn new things along the way, so I’m both creating new links and strengthening the old ones. I also try to keep my quiz accuracy at an 85% baseline.

- Testing: To put it into practice, I write a blog post every week on a new topic I’ve encountered, putting myself through a test to see whether what I studied has been grokked or not.
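The Training step could be sketched as a small weekly quiz scheduler. This is a hypothetical sketch, not a description of an actual tool: it swaps in the Leitner box system (a classic spaced-repetition scheme) to decide which Zettelkasten notes are due each week, and it assumes the “85% baseline” means a target quiz accuracy. The note titles, box intervals, and adjustment rule are all made up for illustration.

```python
from dataclasses import dataclass

# Assumption: the "85% baseline" is read as a target quiz accuracy
TARGET_ACCURACY = 0.85

@dataclass
class Note:
    title: str
    box: int = 1  # Leitner box: 1 = weak link, higher = stronger link

def due_this_week(notes, week):
    # Box k is reviewed every 2**(k - 1) weeks: box 1 weekly, box 2 every other week, ...
    return [n for n in notes if week % (2 ** (n.box - 1)) == 0]

def record(note, correct, max_box=5):
    # A correct answer strengthens the link; a miss sends the note back to box 1
    note.box = min(note.box + 1, max_box) if correct else 1

def next_week_plan(accuracy):
    # Hypothetical rule for staying near the target accuracy
    if accuracy > TARGET_ACCURACY:
        return "add harder or newer notes"
    if accuracy < TARGET_ACCURACY:
        return "spend more time on review"
    return "keep the current mix"

# Week 1: every note starts in box 1, so all of them are due
notes = [Note("Two systems"), Note("Grokking"), Note("Retrieval practice")]
answers = {"Two systems": True, "Grokking": False, "Retrieval practice": True}
due = due_this_week(notes, week=1)
for note in due:
    record(note, answers[note.title])
accuracy = sum(answers[n.title] for n in due) / len(due)
print(f"accuracy {accuracy:.0%}: {next_week_plan(accuracy)}")
```

Missed notes come back every week until they stick, while well-known notes are quizzed less often, which leaves room to add new links without letting the old ones dissolve.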

Leaving notes

One last thing I want to point out: there are so many things I’ve wanted to learn, but I never put real effort into learning them. Your lecture has significantly changed my mind. I would love to learn more about learning how to learn, and to start learning all the things that have been stuck in my head!

It was truly inspiring. I’m doing my best to publish this blog even though this one really sucks, and I’ll keep improving from now on.

Thank you, Barbara, for being such a wonderful person. I truly learned a lot from you.

Best, Kiwi 14/4/2026